A new class of AI for advanced manufacturing

This paper introduces Deep Technology Models (DTMs) — a new class of AI system designed to compress technology innovation cycles by 100–1000× across the world’s most physically and computationally complex industries. It defines the architecture, explains why the underlying technologies have only now matured to make DTMs feasible, and lays out concrete use cases across semiconductors, biotechnology, energy, and space.

Vasu Kalidindi

Cofounder & CTO, Multiscale Technologies, Inc.

EXECUTIVE SUMMARY

The next frontier in AI is not another chatbot.

For the past three years, the world’s attention in artificial intelligence has been concentrated on a single class of system: large language models trained on internet-scale text. The progress has been remarkable. The narrowness has been overlooked. LLMs are extraordinary at reasoning over the corpus of human writing. They are not, on their own, capable of solving the hardest problems in advanced manufacturing — the problems that determine whether the next generation of microprocessors, batteries, drugs, reactors, and spacecraft can actually be built.

Those problems live in physics, not in language. They require multi-scale reasoning across atoms, microstructures, components, and systems. They require the integration of decades of proprietary domain expertise with petabyte-scale industrial data. They require the ability to act, not merely to describe. Solving them at the speed industry now demands requires a new class of AI.

We call this class of system the Deep Technology Model — DTM. A DTM is the synthesis of three substrates that have only recently matured at the same time: large language models capable of technical reasoning; digital twins capable of running calibrated physics on real industrial data; and agentic AI capable of taking real action over real workflows. None of these substrates is sufficient on its own. Their combination is.

Multiscale Technologies has demonstrated the DTM thesis in semiconductor manufacturing, in production deployment with three of the largest US semiconductor companies: a memory leader, a logic leader, and a top-tier global materials supplier. The same architecture, applied with the same partnership model, extends naturally to biotechnology, energy, and space exploration. This paper describes how.

The defining property of a DTM is not its scale. It is its integration: physics, data, language, and action — fused into a single system the engineering team can talk to.

01 · THE PROBLEM

The innovation bottleneck in advanced manufacturing

Advanced manufacturing is the part of the economy where atoms still matter. Semiconductors, biopharmaceuticals, energy systems, aerospace structures, and the equipment used to make all of them are produced by physical processes whose performance depends on phenomena spanning roughly ten orders of magnitude in length, from the angstrom-scale arrangement of dopants in a transistor channel to the warpage of a 300 mm (12-inch) wafer.

The economic consequence of this complexity is that technology innovation in these industries is slow. A new semiconductor node requires roughly five years and tens of billions of dollars to bring to high-volume manufacturing. A new battery chemistry takes a decade from cell-level proof to gigafactory yield. A new drug delivery platform takes longer still. A new reactor design takes a generation. The bottleneck is not curiosity. It is the rate at which an organization can iterate across the coupled physics, chemistry, materials, and process variables that determine whether something works.

Why pure machine learning falls short

Standard machine learning approaches, even the most sophisticated deep neural networks, fail on these problems for three structural reasons. First, labeled training data is sparse. A fab may produce billions of measurements per day, but the label is yield, and the yield signal is rare and lagging. Second, the regions of interest are typically out-of-distribution: the whole point of innovation is to operate where no prior data exists. Third, the underlying physics imposes hard constraints (conservation laws, equations of state, irreversible chemistry) that an unconstrained model is free to violate. A model that violates physics is a model that no engineering team will trust with a yield decision.
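To make the third point concrete, the sketch below shows one simple way a hard constraint can be checked and imposed on a model output after the fact. It is a minimal illustration under stated assumptions, not a description of how any DTM is implemented: the `MassSplit` type, the two-outlet deposition step, and the rescaling rule are all hypothetical and introduced only for this example.

```python
from dataclasses import dataclass


@dataclass
class MassSplit:
    """Hypothetical model output for one deposition step."""
    deposited: float  # kg of precursor that ends up in the film
    exhausted: float  # kg of precursor that leaves through the exhaust


def mass_balance_residual(inflow_kg: float, pred: MassSplit) -> float:
    """Inflow minus accounted-for outflow; zero means mass is conserved."""
    return inflow_kg - (pred.deposited + pred.exhausted)


def enforce_mass_balance(inflow_kg: float, pred: MassSplit) -> MassSplit:
    """Clip negative masses, then rescale so the outputs sum to the inflow."""
    dep = max(pred.deposited, 0.0)
    exh = max(pred.exhausted, 0.0)
    total = dep + exh
    if total == 0.0:
        # The prediction carries no usable split: assume nothing deposits.
        return MassSplit(0.0, inflow_kg)
    scale = inflow_kg / total
    return MassSplit(dep * scale, exh * scale)


# An unconstrained model claims 1.2 kg leaves a step that 1.0 kg entered.
raw = MassSplit(deposited=0.7, exhausted=0.5)
fixed = enforce_mass_balance(1.0, raw)
```

Rescaling is only one option. A production system might instead build the constraint into the model itself, so that a violating output cannot be produced in the first place.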

Why pure simulation falls short

Pure first-principles simulation has the opposite problem. The physics is correct, but the calibration to a real industrial system is incomplete. A density-functional-theory calculation can predict the formation energy of a defect with high accuracy; it cannot predict the defect density of a specific tool on a specific Tuesday. The compute cost of running fully resolved multi-scale simulation across the parameter space of an industrial process is prohibitive. And critically, simulation alone does not learn — every problem starts from scratch.

Why neither LLMs nor digital twins are enough

The two most discussed AI capabilities of recent years — LLMs and digital twins — each address a piece of this gap, but neither closes it. An LLM can reason fluently about a process, summarize a runsheet, and propose a hypothesis, but it cannot evaluate that hypothesis against the calibrated physics of a specific tool. A digital twin can simulate the calibrated physics, but it cannot reason about which experiment to run, explain its results in plain language, or take action. Solving advanced-manufacturing problems at the rate the world now requires demands a system that does all of these things at once. That is what a DTM is.

02 · DEFINITION

What is a Deep Technology Model?

A Deep Technology Model is an AI system that integrates three substrates — calibrated multi-scale physics, large language models, and agentic orchestration — into a single, deployable architecture for solving the hardest problems in a given technology domain.

The word “deep” refers not to network depth but to the depth of the technology stack the model reasons over: from atomic-scale physical chemistry, through process equipment behavior, to factory-scale yield, to cross-product portfolio decisions. The word “technology” refers to the substantive domain — the actual physics, chemistry, materials, and process engineering of an industry — rather than to the AI methodology itself. A DTM is named for what it knows, not for what it is built from.

The three substrates

DTMs are made possible by the simultaneous maturation of three previously separate AI capabilities. Each substrate has a long technical lineage; what is new is that all three are now production-ready at the same time, on the same hardware, with the same data interfaces.

- Large Language Models. Modern frontier models can reason over technical literature, runsheets, defect taxonomies, and engineering documentation in ways that were not possible even three years ago. They provide the natural-language surface that lets engineers interact with an industrial system without writing code.

- Digital Twins. Hybrid physics–machine-learning models — Bayesian where the physics is uncertain, deterministic where it is not — can now run at full plant scale on commodity GPUs. They give the system its grounding: outputs that respect conservation laws, calibrated to the specific tools and processes of a specific industrial site.

- Agentic AI. Tool-using, multi-step agents that can plan, execute, and verify against ground truth have moved from research demos to production deployments. They give the system its hands: the ability to query a database, run a simulation, adjust a setpoint, and return with an answer.

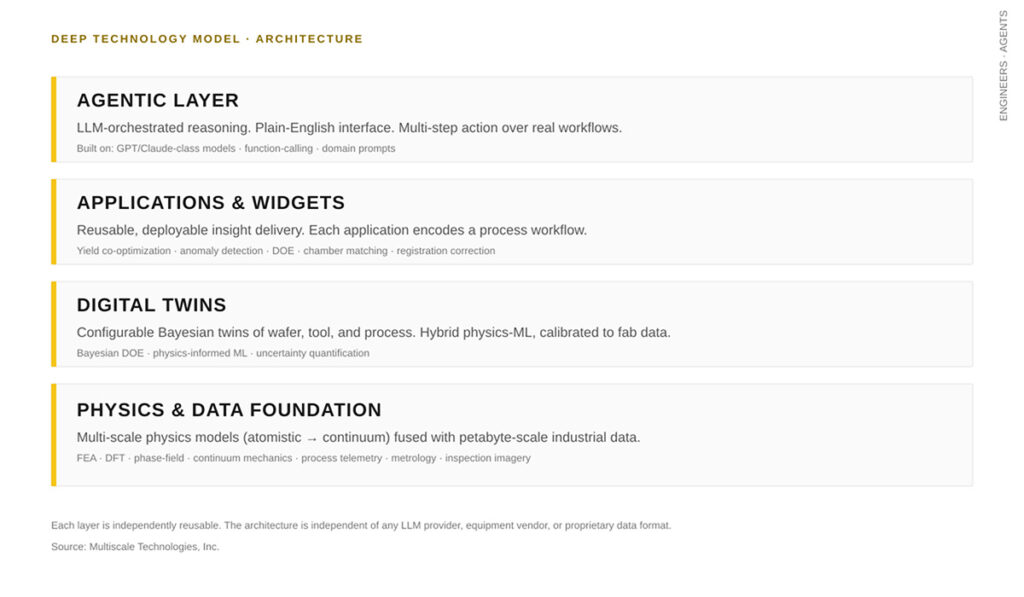

The architecture

A DTM is structured as a four-layer stack. Each layer is independently reusable; the architecture is intentionally independent of any specific LLM provider, equipment vendor, or proprietary data format. The layers, from bottom to top, are: a Physics & Data Foundation; a Digital Twin layer; an Applications & Widgets layer; and an Agentic layer. Figure 1 summarizes the relationships.

FIGURE 1 · DTM ARCHITECTURE

The four layers of a Deep Technology Model. Each layer is independently reusable. Engineers and agents both interact with the stack through the top.
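One way to read the four-layer description is as a chain of narrow interfaces in which each layer depends only on the one beneath it. The sketch below illustrates that reading with toy stand-ins; every class, method, and signal name is hypothetical, the "twin" is just a historical mean, and the `summarize` callable is a placeholder for whichever LLM provider is plugged in.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Foundation:
    """Layer 1: physics parameters and measured data (in-memory stand-in)."""
    measurements: Dict[str, List[float]]

    def series(self, signal: str) -> List[float]:
        return self.measurements[signal]


@dataclass
class DigitalTwin:
    """Layer 2: a model calibrated on foundation data."""
    foundation: Foundation

    def predict(self, signal: str) -> float:
        # Placeholder model: the expected value is the historical mean.
        data = self.foundation.series(signal)
        return sum(data) / len(data)


@dataclass
class DriftWidget:
    """Layer 3: an application that turns twin output into an engineering answer."""
    twin: DigitalTwin

    def drift(self, signal: str, latest: float) -> float:
        return latest - self.twin.predict(signal)


@dataclass
class Agent:
    """Layer 4: calls the application and reports through a language surface."""
    widget: DriftWidget
    summarize: Callable[[str], str]  # provider-agnostic LLM hook

    def report(self, signal: str, latest: float, limit: float) -> str:
        d = self.widget.drift(signal, latest)
        status = "out of limit" if abs(d) > limit else "within limit"
        return self.summarize(f"{signal}: drift {d:+.2f} ({status})")


foundation = Foundation({"chamber_pressure": [10.0, 10.5, 9.5]})
agent = Agent(DriftWidget(DigitalTwin(foundation)), summarize=lambda text: text)
message = agent.report("chamber_pressure", latest=10.75, limit=0.5)
```

Because the agent only sees the widget and the widget only sees the twin, any one layer can in principle be swapped (a different model, a different LLM hook) without touching the others, which is the reusability property the text describes.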

03 · TIMING

Why now

DTMs are not a forecast. They are an artifact of a specific window in technology history — a window that opened recently, that we believe will close, and that explains why the company building DTMs first is likely to build the category.

Three forces converged in the past 24–36 months to make DTMs technically and economically viable for the first time.

LLMs crossed the technical-reasoning threshold

Until recently, LLMs were unreliable on technical content. Tool documentation, equipment manuals, defect taxonomies, and process specifications are dense, jargon-heavy, and reference-laden, and the first generations of LLMs hallucinated freely on this material. The current generation is far more reliable. It can read a fab runsheet, identify the unusual step, retrieve the relevant equipment specification, and explain the discrepancy in language an engineer trusts. This capability is the surface of the DTM. Without it, every interaction would still require code.

Hybrid physics-ML matured at industrial scale

Physics-informed neural networks, Gaussian-process surrogates, and Bayesian uncertainty quantification have existed in the literature for years, in some cases decades, but their compute envelope and software ecosystem were academic. The combination of low-cost GPU compute, mature autodiff frameworks, and high-quality industrial datasets has, in the last few years, brought these methods to production. A digital twin that respects the physics and also learns from data, rather than doing one or the other, is now buildable, deployable, and maintainable.
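The core idea behind physics-informed fitting can be shown without any framework: score a candidate model on how well it matches the data and on how well it satisfies the governing equation. The sketch below is a toy under stated assumptions, not a production method. It assumes first-order decay, dy/dt = -k·y, as the governing physics, uses a finite-difference derivative where a real system would use autodiff, and compares two hand-written candidates instead of training a network. All names are hypothetical.

```python
import math
from typing import Callable, Sequence, Tuple


def physics_informed_loss(
    model: Callable[[float], float],
    data: Sequence[Tuple[float, float]],
    collocation: Sequence[float],
    k: float,
    weight: float = 1.0,
    h: float = 1e-4,
) -> float:
    """Mean squared data error plus weighted mean squared ODE residual."""
    data_term = sum((model(t) - y) ** 2 for t, y in data) / len(data)

    def residual(t: float) -> float:
        # Central-difference estimate of dy/dt, then the residual of dy/dt + k*y = 0.
        dydt = (model(t + h) - model(t - h)) / (2.0 * h)
        return dydt + k * model(t)

    physics_term = sum(residual(t) ** 2 for t in collocation) / len(collocation)
    return data_term + weight * physics_term


K = 0.5
data = [(0.0, 1.0), (2.0, math.exp(-1.0))]  # only two measurements
points = [0.0, 0.5, 1.0, 1.5, 2.0]          # where the physics is checked


def exponential(t: float) -> float:
    return math.exp(-K * t)


slope = (math.exp(-1.0) - 1.0) / 2.0


def straight_line(t: float) -> float:
    return 1.0 + slope * t


loss_exp = physics_informed_loss(exponential, data, points, K)
loss_line = physics_informed_loss(straight_line, data, points, K)
```

Both candidates pass through the two data points, so the data alone cannot separate them. The physics term does: the exponential scores near zero while the straight line is penalized, which is how a physics residual can compensate for sparse labels.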

Agentic systems became reliable enough to act

The third unlock is the move from agents that can plan to agents that can plan, execute, verify, and recover. The reliability bar for agentic systems in advanced manufacturing is high; an agent that recommends a setpoint change in a production fab cannot fail silently. Recent advances in tool use, long-context reasoning, and verification-by-physics have brought agents over that bar in narrow but real domains.
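The plan, execute, verify, and recover loop can be sketched as a single guarded step. This is a minimal illustration under assumptions, not a description of any deployed agent: the `Tool` class, the `run_step` function, and the linear twin and measurement functions are hypothetical, and a real system would act on equipment interfaces instead of an in-memory object.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Tool:
    """Hypothetical stand-in for a process tool with one setpoint."""
    setpoint: float
    log: List[str] = field(default_factory=list)


def run_step(
    tool: Tool,
    proposed: float,
    twin_predict: Callable[[float], float],
    in_spec: Callable[[float], bool],
    measure: Callable[[Tool], float],
) -> bool:
    """Apply a proposed setpoint only if it survives both checks."""
    previous = tool.setpoint
    # Verify against the twin before acting.
    if not in_spec(twin_predict(proposed)):
        tool.log.append(f"rejected {proposed}: twin predicts out-of-spec result")
        return False
    # Execute.
    tool.setpoint = proposed
    tool.log.append(f"applied {proposed}")
    # Verify against ground truth, and recover if the measurement disagrees.
    if not in_spec(measure(tool)):
        tool.setpoint = previous
        tool.log.append(f"rolled back to {previous}: measurement out of spec")
        return False
    tool.log.append("verified")
    return True


def in_spec(value: float) -> bool:
    return 90.0 <= value <= 110.0


def twin(setpoint: float) -> float:
    return 2.0 * setpoint


def biased_measure(tool: Tool) -> float:
    return 2.0 * tool.setpoint + 15.0  # the real tool has drifted from the twin


def clean_measure(tool: Tool) -> float:
    return 2.0 * tool.setpoint


tool = Tool(setpoint=50.0)
rejected = run_step(tool, 70.0, twin, in_spec, biased_measure)   # blocked by the twin
rolled_back = run_step(tool, 52.0, twin, in_spec, biased_measure)  # applied, then reverted
accepted = run_step(tool, 51.0, twin, in_spec, clean_measure)    # applied and verified
```

Every branch writes to the log, so no outcome is silent: a rejected proposal, a rollback, and a verified change each leave a record an engineer can audit.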

Each substrate alone is a feature. Together, for the first time, they constitute a new class of model.

The window is open in part because the underlying technologies are mature, and in part because the industrial conditions demand it. The senior engineers who carry decades of process expertise are retiring faster than they are being replaced. The CHIPS and Science Act, the Inflation Reduction Act (IRA), and equivalent industrial-policy programs in the EU and Asia are funding dozens of new fabs, gigafactories, and reactor sites that need to ramp on a timeline the old methods cannot support. The intersection of supply-side maturity and demand-side urgency is what makes the next five years the formative years of this category.